Deploy Quanton on AKS

This guide walks through deploying the Quanton Operator on a new AKS cluster from scratch — from creating the cluster to running your first Spark job.

Prerequisites

- Azure CLI installed and logged in

- Helm >= 3.x

- kubectl installed

- onehouse-values.yaml downloaded from the Onehouse console

Resource and cluster setup

Step 1: Log in and set your subscription

```shell
az login
```

List available subscriptions and set the one you want to use:

```shell
az account list --output table
az account set --subscription "<subscription-name-or-id>"
```
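Before creating billable resources, it can help to confirm that the subscription you just set is actually the active one. The sketch below relies only on az account show with --query and -o tsv; check_subscription is a hypothetical helper name, and it accepts either the subscription name or its ID.

```shell
# Compare the active Azure subscription against the expected name or ID.
# check_subscription is a hypothetical helper, not part of the Azure CLI.
check_subscription() {
  local expected="$1" name id
  name=$(az account show --query name -o tsv)
  id=$(az account show --query id -o tsv)
  if [ "$expected" = "$name" ] || [ "$expected" = "$id" ]; then
    echo "Active subscription: $name ($id)"
    return 0
  fi
  echo "Active subscription is $name ($id), not $expected" >&2
  return 1
}
# Usage:
#   check_subscription "<subscription-name-or-id>"
```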

Step 2: Register required providers

If this is a fresh subscription, register the AKS and compute providers:

```shell
az provider register --namespace Microsoft.ContainerService
az provider register --namespace Microsoft.Compute
```

Check registration status before proceeding:

```shell
az provider show --namespace Microsoft.ContainerService --query registrationState
az provider show --namespace Microsoft.Compute --query registrationState
```

Wait until both return "Registered".
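Instead of re-running the two show commands by hand, you can poll until a provider reports Registered. This is a minimal sketch; wait_for_provider and its default retry settings are hypothetical, not part of the Azure CLI.

```shell
# Poll an Azure resource provider until its registrationState is "Registered".
# wait_for_provider is a hypothetical helper; the defaults (60 checks, 10 s apart) are arbitrary.
wait_for_provider() {
  local namespace="$1" attempts="${2:-60}" interval="${3:-10}"
  local i=0 state
  while [ "$i" -lt "$attempts" ]; do
    # -o tsv prints the bare value (Registered) rather than the JSON string "Registered"
    state=$(az provider show --namespace "$namespace" --query registrationState -o tsv)
    if [ "$state" = "Registered" ]; then
      echo "$namespace is Registered"
      return 0
    fi
    echo "$namespace is ${state:-unknown}, checking again in ${interval}s"
    sleep "$interval"
    i=$((i + 1))
  done
  echo "Timed out waiting for $namespace to register" >&2
  return 1
}
# Usage:
#   wait_for_provider Microsoft.ContainerService && wait_for_provider Microsoft.Compute
```

Alternatively, az provider register accepts a --wait flag that blocks until registration finishes.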

Step 3: Create a resource group

```shell
az group create --name quanton-test-rg --location westus2
```

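Any region works (for example westus2, eastus, or westeurope). If you pick a region other than westus2, use it in the later az aks get-versions command as well.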

Step 4: Create the AKS cluster

```shell
az aks create \
  --resource-group quanton-test-rg \
  --name quanton-aks \
  --kubernetes-version 1.33.0 \
  --node-count 2 \
  --node-vm-size Standard_D4s_v3 \
  --generate-ssh-keys
```

This takes ~5 minutes. To check available Kubernetes versions in your region:

```shell
az aks get-versions --location westus2 --output table
```

Use a version that lists KubernetesOfficial in the SupportPlan column to avoid requiring a Premium tier cluster.
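Cluster creation can outlast a terminal session. To check whether it finished, you can query the cluster's provisioningState; a value of Succeeded means creation completed. cluster_state is a hypothetical wrapper around az aks show.

```shell
# Print the provisioning state of an AKS cluster (for example Succeeded, Creating, or Failed).
# cluster_state is a hypothetical helper name.
cluster_state() {
  az aks show --resource-group "$1" --name "$2" --query provisioningState -o tsv
}
# Usage:
#   [ "$(cluster_state quanton-test-rg quanton-aks)" = "Succeeded" ] && echo "cluster is ready"
```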

Step 5: Configure kubectl

```shell
az aks get-credentials --resource-group quanton-test-rg --name quanton-aks
kubectl get nodes
```

You should see two nodes in the Ready state.
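To script the node check rather than eyeball it, you can count the nodes whose STATUS is Ready. count_ready_nodes is a hypothetical helper, and it assumes the default column layout of kubectl get nodes.

```shell
# Count nodes whose STATUS column is exactly "Ready".
# Assumes the default `kubectl get nodes` columns: NAME STATUS ROLES AGE VERSION.
count_ready_nodes() {
  kubectl get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}
# Usage:
#   [ "$(count_ready_nodes)" -eq 2 ] && echo "both nodes are Ready"
```

If you would rather block until the nodes are ready, kubectl wait --for=condition=Ready nodes --all --timeout=300s does that directly.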

Install the operators

Step 6: Install the Spark Operator

The Quanton Operator extends the Kubeflow Spark Operator, so install the Spark Operator first:

```shell
helm repo add spark-operator https://kubeflow.github.io/spark-operator
helm repo update
helm install spark-operator spark-operator/spark-operator \
  --namespace spark-operator \
  --create-namespace \
  --set "spark.jobNamespaces={default}"
```

Verify it's running:

```shell
kubectl get pods -n spark-operator
```
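Rather than re-running get pods until everything is up, you can have kubectl wait block on the operator's Deployments. This sketch waits for the Available condition on every Deployment in the namespace; wait_for_deployments is a hypothetical helper, and the 120-second default timeout is arbitrary.

```shell
# Block until every Deployment in the namespace reports the Available condition.
# wait_for_deployments is a hypothetical helper; the timeout default is arbitrary.
wait_for_deployments() {
  local namespace="$1" timeout="${2:-120s}"
  kubectl wait --for=condition=Available deployment --all \
    --namespace "$namespace" --timeout="$timeout"
}
# Usage:
#   wait_for_deployments spark-operator
```

The same call should work for the quanton-operator namespace after Step 7, assuming its pods are managed by Deployments, as names like dp-proxy-deployment-xxxx-xxxxx suggest. Waiting on Deployments rather than pods also avoids hanging on pods from completed Jobs, which never report Ready.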

Step 7: Install the Quanton Operator

```shell
helm upgrade --install quanton-operator oci://registry-1.docker.io/onehouseai/quanton-operator \
  --namespace quanton-operator \
  --create-namespace \
  --set "quantonOperator.jobNamespaces={default}" \
  -f /path/to/onehouse-values.yaml
```
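If you later need Quanton to watch several namespaces, keeping the list in a values file can be easier to review than a brace list passed to --set. The sketch below writes such a file; write_quanton_overrides and the quanton-overrides.yaml file name are hypothetical, and the YAML simply mirrors the quantonOperator.jobNamespaces key used above.

```shell
# Write a Helm values file listing the namespaces the Quanton Operator should watch.
# Hypothetical helper; the result mirrors --set "quantonOperator.jobNamespaces={ns1,ns2,...}".
write_quanton_overrides() {
  local out="$1" ns
  shift
  {
    echo "quantonOperator:"
    echo "  jobNamespaces:"
    for ns in "$@"; do
      echo "    - $ns"
    done
  } > "$out"
}
# Usage:
#   write_quanton_overrides quanton-overrides.yaml default
```

Pass it after your Onehouse file (-f /path/to/onehouse-values.yaml -f quanton-overrides.yaml) and drop the --set flag. Helm gives precedence to the right-most -f file, and any --set value would override both files.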

Verify the pods are running (may take ~30–60 seconds to initialize):

```shell
kubectl get pods -n quanton-operator
```

Expected output once ready:

```text
NAME                             READY   STATUS    RESTARTS   AGE
dp-proxy-deployment-xxxx-xxxxx   1/1     Running   0          60s
quanton-controller-xxxx-xxxxx    3/3     Running   0          60s
```

If a pod shows PodInitializing or fewer ready containers than expected (for example 0/1), wait a moment and re-run the command.
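To see at a glance which pods are still initializing, you can compare the two numbers in the READY column. not_ready_pods is a hypothetical helper that assumes the default kubectl get pods column layout (NAME READY STATUS RESTARTS AGE).

```shell
# Print NAME, READY, and STATUS for pods whose ready-container count is below the total.
# not_ready_pods is a hypothetical helper; it parses the READY column (ready/total).
not_ready_pods() {
  kubectl get pods --namespace "$1" --no-headers |
    awk '{ split($2, c, "/"); if (c[1] != c[2]) print $1, $2, $3 }'
}
# Usage:
#   not_ready_pods quanton-operator
```

Empty output means every container in every pod is ready.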

Submit and monitor a job

Step 8: Submit a test job

```shell
kubectl apply -f https://raw.githubusercontent.com/onehouseinc/quanton-operator/main/examples/quanton-application.yaml
```

Confirm the application was created:

```shell
kubectl get quantonsparkapplications -n default
```

Monitor the driver pod (may take 2–3 minutes while the Quanton image is pulled):

```shell
kubectl get pods -A | grep driver
```

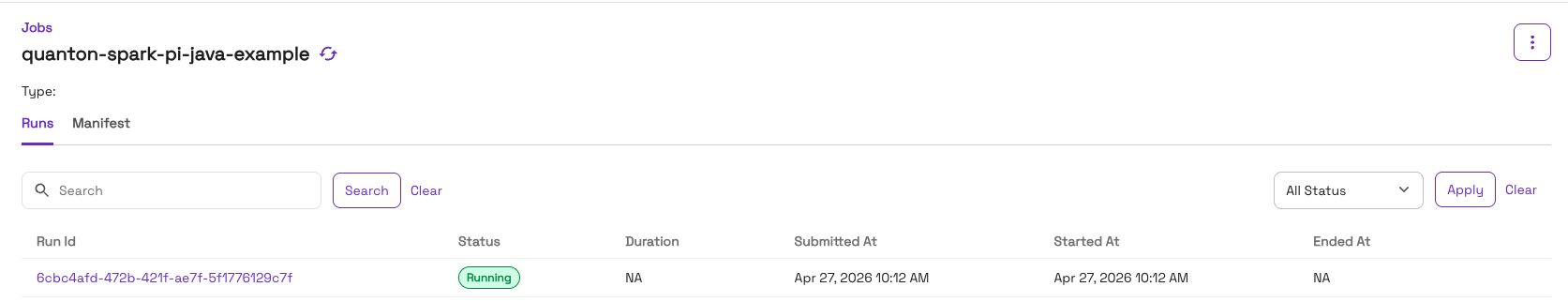
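The driver pod can take a few minutes to appear while the image is pulled, so a polling loop saves re-running the command by hand. This is a sketch: wait_for_driver is a hypothetical helper, the driver pod name comes from the kubectl logs command below, and the defaults (36 checks, 5 seconds apart) roughly cover the 2 to 3 minutes mentioned above.

```shell
# Poll a Spark driver pod until it is Running or Succeeded; fail fast if it Failed.
# wait_for_driver is a hypothetical helper; defaults give about 3 minutes (36 checks x 5 s).
wait_for_driver() {
  local pod="$1" namespace="${2:-default}" attempts="${3:-36}" interval="${4:-5}"
  local i=0 phase
  while [ "$i" -lt "$attempts" ]; do
    # Empty output (pod not created yet) is treated as "keep waiting"
    phase=$(kubectl get pod "$pod" --namespace "$namespace" -o jsonpath='{.status.phase}' 2>/dev/null) || true
    case "$phase" in
      Running|Succeeded)
        echo "$pod is $phase"
        return 0 ;;
      Failed)
        echo "$pod failed; inspect it with kubectl describe pod $pod" >&2
        return 1 ;;
    esac
    sleep "$interval"
    i=$((i + 1))
  done
  echo "Timed out waiting for $pod" >&2
  return 1
}
# Usage:
#   wait_for_driver quanton-spark-pi-java-example-driver
```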

You can also track the job in the Onehouse console under Jobs.

Once the driver pod is running, follow its logs and filter for the result:

```shell
kubectl logs -f quanton-spark-pi-java-example-driver | grep -i "pi is"
```

Expected output:

```text
Pi is roughly 3.141592...
```

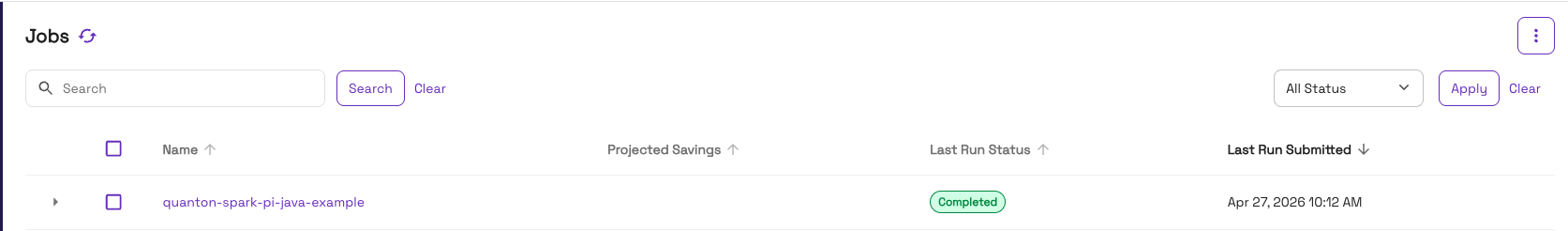
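To turn the log check into a pass/fail smoke test, you can parse the reported value. The sketch assumes the example behaves like Spark's SparkPi, which estimates pi by random sampling, so the result only approximates pi; the 0.05 tolerance and the check_pi name are arbitrary choices, not part of the example.

```shell
# Read driver logs on stdin; succeed if the last "Pi is roughly N" line has N within 0.05 of pi.
# check_pi is a hypothetical helper; the tolerance is an arbitrary smoke-test threshold.
check_pi() {
  awk '
    tolower($0) ~ /pi is roughly/ {
      v = $NF + 0          # numeric value of the last field, e.g. 3.1418...
      d = v - 3.14159
      if (d < 0) d = -d
      found = 1
      ok = (d < 0.05)
    }
    END { if (found && ok) exit 0; exit 1 }
  '
}
# Usage (after the driver finishes, so kubectl logs returns without -f):
#   kubectl logs quanton-spark-pi-java-example-driver -n default | check_pi && echo "pi estimate looks right"
```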

Once the job finishes, the console shows it as Completed.

Troubleshooting

ImagePullBackOff on quanton-controller

If kubectl get pods -n quanton-operator shows ImagePullBackOff on the quanton-controller pod, check the events:

```shell
kubectl describe pod -n quanton-operator <quanton-controller-pod-name> | grep -A 30 "Events:"
```
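Rather than copying the pod name into the describe command by hand, you can look it up. controller_pod is a hypothetical helper that assumes the controller pod's name starts with quanton-controller-, as in the expected output from Step 7.

```shell
# Print the name of the first pod in quanton-operator whose name starts with quanton-controller-.
# controller_pod is a hypothetical helper; -o name prints entries as pod/<name>.
controller_pod() {
  kubectl get pods --namespace quanton-operator -o name |
    sed -n 's#^pod/\(quanton-controller-.*\)#\1#p' |
    head -n 1
}
# Usage:
#   kubectl describe pod -n quanton-operator "$(controller_pod)" | grep -A 30 "Events:"
```

kubectl get events -n quanton-operator --sort-by=.lastTimestamp is another way to see recent pull errors across the whole namespace.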

A TLS handshake timeout while pulling from dist.onehouse.ai can mean the AKS nodes can't reach the Onehouse image registry, but it can also be a transient failure that resolves on retry. First, wait a minute and re-check the pod status. If the error persists, verify connectivity from inside the cluster:

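```shell
kubectl run nettest --image=busybox --restart=Never -- \
  sh -c "wget -qO- https://dist.onehouse.ai 2>&1 || true"
# Wait for the one-off pod to finish before reading its output
kubectl wait --for=jsonpath='{.status.phase}'=Succeeded pod/nettest --timeout=60s
kubectl logs nettest
kubectl delete pod nettest
```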

By default, AKS clusters use a Standard Load Balancer for outbound internet access. If you've customized networking (a private cluster, custom NSGs, or an egress firewall), you'll need to allowlist the required endpoints. See Network Configuration for the full list.

Checking the image pull secret

The Helm chart creates an image pull secret from your onehouse-values.yaml. Verify it exists:

```shell
kubectl get secret -n quanton-operator | grep onehouse
```

If the secret is missing, your onehouse-values.yaml may be missing the imagePullSecrets.accessToken field. Download a fresh copy from the Onehouse console.
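Before downloading a new file, you can check whether the field is there. This is a rough text check, not a YAML parser: has_access_token is a hypothetical helper, and it assumes the key is written in block style as an indented accessToken under a top-level imagePullSecrets key, which is how the imagePullSecrets.accessToken path above would normally appear.

```shell
# Rough check: succeed if an indented accessToken key with a value appears under a
# top-level imagePullSecrets key. Assumes block-style YAML; this is not a YAML parser.
has_access_token() {
  awk '
    /^imagePullSecrets:/ { in_block = 1; next }
    in_block && /^[^[:space:]#]/ { in_block = 0 }   # the next top-level key ends the block
    in_block && /^[[:space:]]+accessToken:[[:space:]]*[^[:space:]]/ { found = 1 }
    END { if (found) exit 0; exit 1 }
  ' "$1"
}
# Usage:
#   has_access_token /path/to/onehouse-values.yaml || echo "accessToken not found"
```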

Cleanup

When you're done, delete the resource group to remove all resources:

```shell
az group delete --name quanton-test-rg --yes --no-wait
```
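Because --no-wait returns immediately, the delete command doesn't tell you when deletion finishes. The sketch below blocks until the group is gone and then confirms with az group exists; confirm_rg_deleted is a hypothetical helper name.

```shell
# Block until the resource group is deleted, then double-check with az group exists.
# confirm_rg_deleted is a hypothetical helper name.
confirm_rg_deleted() {
  local group="$1"
  az group wait --name "$group" --deleted || return 1
  [ "$(az group exists --name "$group")" = "false" ]
}
# Usage:
#   confirm_rg_deleted quanton-test-rg && echo "quanton-test-rg deleted"
```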

Next steps

- Running Jobs: submit your own QuantonSparkApplication resources

- Azure integration reference: ADLS Gen2 access, node pools, and production setup